Managing Agents, Not Programming Them

Disclaimer: AI helped refine this post, and the ideas are mine. If you're worried about who typed each sentence, you're missing the point.

Lately I've been spending a lot of time playing around with agent orchestration systems - thinking about how they should be designed, where they work well, and where they seem to go wrong.

One thing I keep noticing is that when you ask an AI model for advice on how to orchestrate AI systems, it often defaults to a style of thinking inherited from the previous era of software (which makes sense given its training data). The outputs are usually clean, structured, and sensible on the surface: rigid schemas, tightly defined roles, explicit contracts, deterministic handoffs, carefully separated components.

That style made a lot of sense for traditional software. If your building blocks are brittle and non-intelligent, then reliability comes from constraining behaviour, narrowing interfaces, and trying to make the whole thing as predictable as possible.

But capable, intelligent agents seem to change the nature of the problem.

The more I work with them (and the better they get), the less they feel like ordinary software components and the more they feel like capable operators - able to reason, explore, improvise, revise, and sometimes find paths that were not specified in advance, even at a high level. And once that starts to feel true, a lot of the default architectural advice starts to look slightly off. Not exactly wrong, but too eager to impose structure too early.

That, at least to me, seems like one of the key failure modes in agent orchestration design. If you ask a model how to build an agent system, it will often give you something that looks like old-school software architecture with LLMs inserted into the boxes. That is, the roles are sharply defined, the interfaces narrow, the transitions explicit, and the whole thing looks neat and controllable.

But neatness is not the same as effectiveness.

I saw this directly in a project of my own. I initially designed it as a map of specialist agents, each with a tightly scoped role and cleanly separated responsibility. It looked organised, but it was too restrictive in practice - the agents were boxed in before they even had the chance to reason broadly.

I eventually redesigned the system around a simpler default: start with the minimum number of agents (one, actually!), give them rich context and tools, and push their capabilities first. Only split responsibilities and introduce new interfaces when that becomes genuinely necessary. That shift alone reduced the number of agents dramatically and improved outcomes.

In practice, too much imposed structure can reduce the very thing that makes agents useful in the first place: optionality. It can make the system more fragile, more over-optimised, and less able to benefit from the flexibility of the underlying intelligence. Instead of enabling exploration, it starts to police it.

What seems more promising is a different default: give agents a clear objective, rich context, access to tools, access to memory, and room to explore. Keep constraints, but apply them at the right level - guardrails around budgets, permissions, risk, and evaluation, without trying to prescribe every intermediate step in advance.

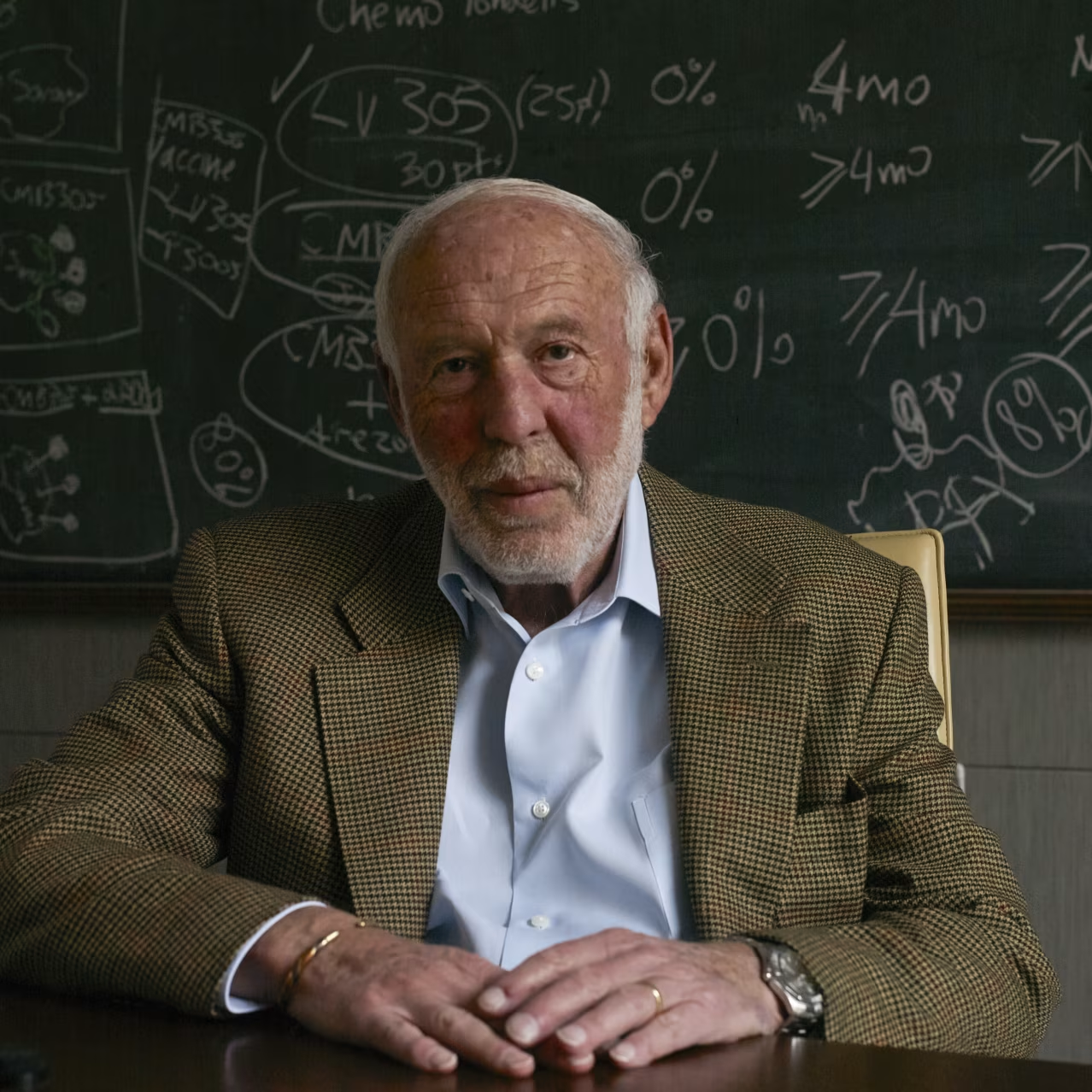
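To make "constraints at the right level" a bit more concrete, here is a minimal sketch of what I have in mind. Everything in it is hypothetical and invented for illustration (`Guardrails`, `guarded`, and the tool names are not from any real framework): the agent can call whatever tools it likes, in whatever order, and the only things enforced are a permission list and a call budget at the boundary.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


class GuardrailViolation(Exception):
    """Raised when an action would cross a boundary set by the designer."""


@dataclass
class Guardrails:
    # Hypothetical guardrail set: a call budget plus a permission list.
    max_tool_calls: int
    allowed_tools: frozenset
    calls_made: int = 0
    audit_log: List[str] = field(default_factory=list)

    def check(self, tool_name: str) -> None:
        # Permissions first, then budget. Nothing here inspects *why*
        # the agent wants the tool or what step it is on.
        if tool_name not in self.allowed_tools:
            raise GuardrailViolation(f"tool not permitted: {tool_name}")
        if self.calls_made >= self.max_tool_calls:
            raise GuardrailViolation("tool-call budget exhausted")
        self.calls_made += 1
        self.audit_log.append(tool_name)  # kept for later evaluation


def guarded(tools: Dict[str, Callable[..., object]], rails: Guardrails):
    """Wrap a tool table so every call passes through the guardrails."""
    def call(tool_name: str, *args, **kwargs):
        rails.check(tool_name)
        return tools[tool_name](*args, **kwargs)
    return call


if __name__ == "__main__":
    tools = {
        "search": lambda query: f"results for {query}",
        "delete_all": lambda: "gone",
    }
    rails = Guardrails(max_tool_calls=2, allowed_tools=frozenset({"search"}))
    call = guarded(tools, rails)
    print(call("search", "agent orchestration"))
    try:
        call("delete_all")
    except GuardrailViolation as exc:
        print("blocked:", exc)
```

The point of the sketch is what it leaves out: there is no workflow graph and no prescribed sequence of steps, only limits on what the agent may touch and how much it may spend.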

As agent systems become more capable, the design problem seems to shift - away from hand-stitching together deterministic, error-proof workflows, and toward managing intelligent but fallible actors. This framing isn't new, and it maps cleanly onto something Jim Simons said about managing human talent at Renaissance:

"You get smart people together. You give them a lot of freedom. Create an atmosphere where everyone talks to everyone else. They're not hiding in a corner with their own little thing. They talk to everybody else. And you provide the best infrastructure, the best computers and so on that people can work with. And make everyone partners." - Jim Simons

To be clear, I don't mean the absence of structure, but rather structure that supports intelligence rather than suffocates it. Once you frame agent design the way Simons framed managing people, risk management becomes central. You stop assuming the goal is to eliminate error entirely, because that's not really how you work with capable beings. The goal becomes making errors legible, recoverable, bounded, and correctable over time.
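As a rough illustration of those four properties, here is a small sketch under my own assumptions. The names `Attempt`, `Outcome`, and `run_bounded` are made up for this post, and `step` stands in for whatever an agent actually does. Failures are recorded rather than hidden (legible), the run continues after one (recoverable), attempts are capped (bounded), and the history is handed back to the step so it can adjust (correctable).

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class Attempt:
    number: int
    error: Optional[str]  # None means this attempt succeeded


@dataclass
class Outcome:
    value: Optional[object]
    attempts: List[Attempt] = field(default_factory=list)

    @property
    def succeeded(self) -> bool:
        return bool(self.attempts) and self.attempts[-1].error is None


def run_bounded(step: Callable[[List[Attempt]], object],
                max_attempts: int = 3) -> Outcome:
    """Run a hypothetical agent step with a hard cap on attempts.

    The step receives the list of earlier attempts, so it can see
    what went wrong before and try something different.
    """
    outcome = Outcome(value=None)
    for number in range(1, max_attempts + 1):
        try:
            outcome.value = step(outcome.attempts)
            outcome.attempts.append(Attempt(number, None))
            return outcome
        except Exception as exc:
            # Record the failure instead of swallowing it or crashing.
            outcome.attempts.append(
                Attempt(number, f"{type(exc).__name__}: {exc}")
            )
    return outcome  # budget spent; the caller decides what happens next


if __name__ == "__main__":
    def flaky_step(history: List[Attempt]) -> str:
        # Stand-in for an agent that gets it right on the third try.
        if len(history) < 2:
            raise ValueError("bad draft")
        return "ok"

    result = run_bounded(flaky_step)
    print(result.succeeded, [a.error for a in result.attempts])
```

Nothing here tries to prevent the error in the first place. It only makes sure a mistake is visible, costs a known amount, and feeds into the next attempt.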

None of this is revolutionary, which is maybe reassuring. We already know how to manage talented but imperfect people. We already know that autonomy can outperform over-control when capability is high enough, and that good infrastructure, good feedback loops, and good communication often matter more than micromanagement. What seems new is that software itself is starting to move into that territory.

If that is right, then the next era of software development may look less like assembling deterministic systems and more like managing small populations of intelligent workers - not perfect workers, but capable ones. The job of the designer is then not to force them into rigid boxes, but to create an environment in which useful work can emerge reliably enough, safely enough, and with enough room for intelligence to matter.

The best agent systems may end up looking less like pipelines and more like well-run research groups: autonomous actors, shared context, good tools, strong feedback loops, and a clear sense of what matters.